Introduction: The Dilemma of the AI Era

As generative AI adoption in business advances, many organizations face the same dilemma:

- The business wants to push forward with AI adoption

- The field (front-line teams) demands speed and flexibility

At the same time, human rights, privacy, and safety cannot be neglected. Yet translating these principles into a form that can actually be implemented in the field is far from easy.

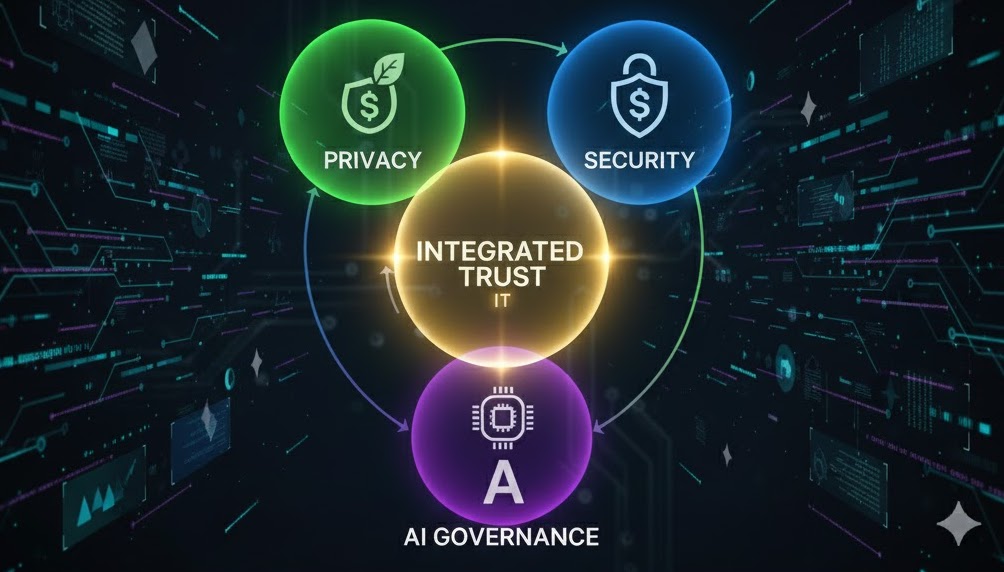

This article examines how to bridge the gap between principles and reality from the perspective of the SPA Integrated Trust Triad (SPA-IT), an integrated approach that unifies Security, Privacy, and AI Governance so that they work in practice across domains.

1. AI Principles Are “Correct,” but They Don’t Implement Themselves

The OECD AI Principles set out values that are critically important, including:

- Innovation

- Respect for human rights

- Safety and robustness

- Accountability

No one would dispute any of these. Yet the field raises concerns such as:

- “We still don’t know what we’re actually supposed to do”

- “If we check everything, development stops”

- “We can’t judge what’s ‘enough’”

Principles show the destination, but they don’t provide the route (design and operations).

2. Security, Privacy, AI Governance, or Development Experience Alone: None Is Enough

AI-era problems cannot be solved by optimizing for a single domain. Making governance work in the field requires redesigning it to fit the organization and the use case. For example, the sequence of shadow AI inventory → risk classification → exception design → logs/audit trails and responsibility boundaries must be restructured so that it operates within the development process itself.

Consider your own position. Depending on which single perspective dominates, the field tends toward:

- Security perspective only → “Ban everything” becomes the optimal answer

- Privacy perspective only → Doesn’t work in practice

- Governance perspective only → Ends in abstraction

- Development perspective only → Risks become invisible

- User perspective only → Convenience wins; rules get circumvented

What’s needed is design that understands each domain’s logic and strikes a balance among them. This is a skill that can only be mastered through practice, not theory.

3. Not “Don’t Let Them Use It,” but “Enable Them to Judge”

Abstracted, the common thread in AI governance that works in practice is surprisingly simple:

- What’s OK and what’s not

- Where judgment is required

- Who judges, and who is responsible

These must be defined in advance. What is needed is neither blanket prohibition nor leaving everything to the field, but comprehensive measures that leave room for judgment.
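To make the three questions above concrete, here is a minimal Python sketch that records them as a policy table defined in advance. The data categories, role names, and the `evaluate` function are hypothetical illustrations, not part of SPA-IT or any real product. The design choice worth noting is that unclassified data defaults to human review rather than to either a blanket allow or a blanket ban.

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    ALLOWED = "allowed"            # OK: no further approval needed
    NEEDS_REVIEW = "needs_review"  # gray zone: a named person must judge
    PROHIBITED = "prohibited"      # not OK under any circumstances


@dataclass(frozen=True)
class PolicyRule:
    decision: Decision
    owner: str  # who judges and who is accountable (hypothetical role names)


# Hypothetical policy table, defined in advance: data category -> rule.
POLICY: dict[str, PolicyRule] = {
    "public": PolicyRule(Decision.ALLOWED, "team lead"),
    "internal": PolicyRule(Decision.NEEDS_REVIEW, "data owner"),
    "personal_data": PolicyRule(Decision.NEEDS_REVIEW, "privacy officer"),
    "credentials": PolicyRule(Decision.PROHIBITED, "security officer"),
}

# Fallback for anything not yet classified (e.g. newly found shadow AI use).
DEFAULT_RULE = PolicyRule(Decision.NEEDS_REVIEW, "AI governance lead")


def evaluate(data_category: str) -> PolicyRule:
    """Return the predefined rule; unknown categories go to human review."""
    return POLICY.get(data_category, DEFAULT_RULE)


if __name__ == "__main__":
    for category in ["public", "personal_data", "credentials", "unlabeled"]:
        rule = evaluate(category)
        print(f"{category}: {rule.decision.value} (owner: {rule.owner})")
```

Because every rule carries an owner, the table answers “who judges” at the same time as “what’s OK,” and new categories can be added as the inventory grows.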

4. Innovation and Safety Are Not a Trade-off

In AI adoption, there seem to be moments when you must choose between innovation and safety or human rights. In practice, however, integrated design lets the two coexist:

- Clarify the points where human intervention is needed

- Decide in advance who owns judgment and responsibility

- Ensure logs (audit trails) and explainability

These are not brakes on innovation; they are prerequisites for pressing the accelerator with confidence.

5. Checkpoint Perspectives for Measuring Implementability (Excerpts)

From the SPA-IT perspective, these are the areas that most often become bottlenecks in practice. They are not drawn from any specific organization; they are generalized pain points seen repeatedly across field experience.

In many organizations, operations start while “exception handling,” “audit trails,” and “responsibility boundaries” are still undefined, and shadow AI persists as a result. Whether governance can actually be implemented therefore depends on designing inventory and classification, exception handling, and logging/audit mechanisms as one continuous whole.

- Is the nature of the input data clearly understood and classified?

- Where are AI judgment results handed off to humans?

- When unexpected behavior occurs, are there means to stop it?

- If an incident occurs, is the organization able to fulfill its accountability?

These questions concern not the type of tool but “how it is used” and “how it is operated.”
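Three of the checkpoints above (the handoff to humans, the means to stop, and accountability after an incident) can be sketched in code. The following minimal Python sketch is hypothetical: `AIGate`, the 0.8 confidence threshold, and the log format are illustrative assumptions, not a real library API or a prescribed design, and it assumes the AI component returns a confidence score. It routes low-confidence results to a human reviewer, provides a stop switch, and writes every event to an append-only audit trail.

```python
import json
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class AIGate:
    """Hypothetical wrapper around an AI component (not a real library)."""
    review_threshold: float = 0.8   # assumed value: below this, a human decides
    stopped: bool = False           # stop switch for unexpected behavior
    audit_log: list[str] = field(default_factory=list)  # append-only JSON lines

    def stop(self, reason: str) -> None:
        """Halt all further AI decisions and record why."""
        self.stopped = True
        self._record("stop", {"reason": reason})

    def decide(self, request_id: str, ai_label: str, confidence: float) -> str:
        """Return 'auto', 'human_review', or 'stopped' for one AI result."""
        if self.stopped:
            self._record("blocked", {"request_id": request_id})
            return "stopped"
        # Handoff point: low-confidence results are passed to a human.
        route = "human_review" if confidence < self.review_threshold else "auto"
        self._record(route, {"request_id": request_id, "ai_label": ai_label,
                             "confidence": confidence})
        return route

    def _record(self, event: str, details: dict) -> None:
        # A UTC timestamp on every entry lets incidents be reconstructed later.
        entry = {"time": datetime.now(timezone.utc).isoformat(),
                 "event": event, **details}
        self.audit_log.append(json.dumps(entry))


if __name__ == "__main__":
    gate = AIGate()
    print(gate.decide("req-1", "approve", 0.95))  # auto
    print(gate.decide("req-2", "approve", 0.55))  # human_review
    gate.stop("unexpected outputs reported")
    print(gate.decide("req-3", "approve", 0.99))  # stopped
    print(len(gate.audit_log))                    # 4
```

Logging the stop event and blocked requests, not only successful decisions, is what allows the organization to explain afterward what happened and who decided.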

Conclusion

AI principles exist both to protect human rights and to advance adoption. Achieving both in the field requires understanding the principles, the technology, and the realities of the organization.

As a starting point for building that understanding, we have published an AI Governance / Safety Checklist (Preview edition).


Disclaimer: This article represents the personal views of the author based on information available as of December 2025, and does not represent the views of any affiliated or related organization.